In 2005, when Martin Saerbeck was studying computer science at Bielefeld University in Germany, he programmed a service robot called BIRON. Mounted with a pan-tilt camera on top, BIRON was able to follow a human pointing gesture and focus on the object pointed at. On one occasion, however, BIRON’s camera lost track of Saerbeck’s hand and the robot appeared to be sleeping or to have lost interest. Without thinking, Saerbeck waved his hand in front of BIRON and said “Wake up!”

Martin Saerbeck is developing a tutoring application using the iCAT research platform

“But then, I thought, ‘What am I doing?’” says Saerbeck. “I didn’t program waving detection so I should have known it wouldn’t move, but it was natural for me to talk to the robot in that way.” The experience is a demonstration of how people are naturally inclined to use normal human social skills when interacting with technology.

Inspired by such experiences, Saerbeck went on to develop a series of programs and architectures that enable robots to mimic human-to-human communication in a natural and readily understandable manner. “I don’t want to think too much about how to interact with the device or how to control it,” he says. Saerbeck, now a research scientist at the A*STAR Institute of High Performance Computing, is interested in developing robots that can be programmed to express words or reactions in response to a dialog with a human instead of simply responding to a few preset keywords. The goal is to enable the user to understand the current state of the robot in a natural way.

In recent years, ‘social’ robots—cleaning robots, nursing-care robots, robot pets and the like—have started to penetrate into people’s everyday lives. Saerbeck and other robotics researchers are now scrambling to develop more sophisticated robotic capabilities that can reduce the ‘strangeness’ of robot interaction. “When robots come to live in a human space, we need to take care of many more things than for manufacturing robots installed on the factory floor,” says Haizhou Li, head of the Human Language Technology Department at the A*STAR Institute for Infocomm Research. “Everything from design to the cognitive process needs to be considered.”

Mimicking human interaction

Haizhou Li (right) introduces the capabilities of OLIVIA—Singapore’s first social robot

Li leads the ASORO program, which was launched in 2008 as A*STAR’s first multi-disciplinary team for robot development covering robotic engineering, navigation and mechatronics, computer vision and speech recognition. The program’s 35 members have developed seven robots, including a robot butler and a robot home assistant. Their flagship robot is OLIVIA, a robot receptionist who also acts as a research platform for evaluating various technologies related to social robotics.

Li unveiled the latest version of OLIVIA, ‘OLIVIA 2.1’, at the RoboCup 2010 competition in Singapore. In her receptionist mode, OLIVIA welcomes guests and responds to a few key phrases such as “Can you introduce yourself?” She is also able to track human faces and eyes, and detect the lip motions of speakers. Eight head-mounted microphones enable OLIVIA to accurately locate the source of human speech and turn to the speaker. Intriguingly, OLIVIA even performs certain gestures learned from human demonstrators. Li is now discussing collaborations with Saerbeck to upgrade the OLIVIA platform.

One of the core premises of enhanced human–robot interaction is the concept of ‘believability’, says Saerbeck. A robot is controlled by a highly sophisticated and technical architecture of programs, sensors and actuators, and without detailed attention to the robot’s ‘animation’, the robot can appear mechanical and alien. For example, if a vacuum cleaning robot were to bump into an object and say “Ouch,” people would understand that it is simulating being hurt. However, static animations are not sufficient to give the impression of a life-like character. If the robot kept bumping into things and repeated the same reaction each time, the animation would no longer be convincing. “What we are investigating now is how we can take context into account, and how behaviors can develop over time,” says Saerbeck. The research draws on psychology, social science and linguistics to create models for appropriate actions using animation frameworks. He is also working on more sophisticated programming so that robots can autonomously cope with a wider range of situations instead of resorting to conventional programming of prescribed sequences of actions that humans are expected to perform.
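The difference between a static animation and a context-aware one can be illustrated with a toy sketch. It is not taken from Saerbeck's software: the class name `BumpReactor`, the reaction phrases and the obstacle labels are all hypothetical, and the only 'context' modeled is how many times the robot has already hit the same obstacle.

```python
from collections import Counter

# Hypothetical reactions, ordered from the first bump to repeated bumps.
REACTIONS = ["Ouch!", "Not again...", "I should avoid this spot."]


class BumpReactor:
    """Picks a reaction based on how often the same obstacle was hit."""

    def __init__(self):
        # Context: number of bumps recorded per obstacle.
        self.bump_counts = Counter()

    def react(self, obstacle_id: str) -> str:
        self.bump_counts[obstacle_id] += 1
        # Clamp the index so that later bumps reuse the final reaction.
        index = min(self.bump_counts[obstacle_id], len(REACTIONS)) - 1
        return REACTIONS[index]


robot = BumpReactor()
for obstacle in ["chair", "chair", "table", "chair"]:
    print(obstacle, "->", robot.react(obstacle))
```

A static animation would return the same phrase on every call. Here the reaction changes as the history for each obstacle grows, which is a very small instance of a behavior developing over time.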

Help from a robotutor

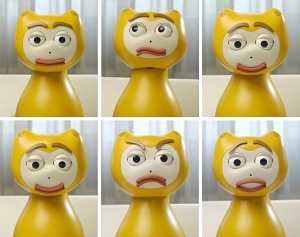

The interactive iCAT robot for language teaching

Bringing all these technological elements together, Saerbeck is currently developing a robotic tutor that assists school children with vocabulary-learning tasks. The project dates back to the time when he worked at Philips Research in the Netherlands. In one experiment, his team divided 16 children aged 10–11 years into two groups, and varied the degree of social interaction with a cat-shaped robot named iCAT. All of the children had good language skills and were given the same artificial language to study. The children in the group with the more socially responsive robot scored significantly higher than the children who interacted with a robot that behaved in the style of current learning programs. The children with the social iCAT also showed significantly higher intrinsic and task motivation.

At A*STAR, Saerbeck is still using iCAT as a prototype platform. His team is now planning to build a completely new desktop-based static robot tailored for tutoring applications and equipped with specialized hardware for teaching. The design has yet to be fixed, but the researchers are evaluating various technologies including touch screens, flash cards, projectors and gesture-based interfaces.

Meanwhile, Li is also upgrading OLIVIA to enable her to learn from speakers and to understand naturally spoken queries. Ultimately, he plans for OLIVIA to be able to deliver information or take actions such as making a taxi booking and shaking hands. However, Li admits that there are many technological gaps that need to be filled before ‘OLIVIA 3.0’ becomes a reality. OLIVIA’s current technologies are already sufficiently advanced to attract commercial interest: aspects of OLIVIA have been applied in a commercial surveillance system. But, according to Li, there remains much scope for improvement, for example, in the accuracy of visual and speech understanding and in real-time compliant control.

It’s all about context

Haizhou Li with OLIVIA

One of the biggest challenges is to improve robotic ‘attention’, says Li. “In human-to-human interaction, we share a natural concept of communication—we know when the conversation starts and ends, and when we can start talking in a group. We are now trying to facilitate this kind of ability in a robot.” Li’s team is developing new algorithms and cognitive processes that could enable a robot to engage in conversation with both visual and auditory attention, accompanied by natural body language.

As the context of communications is also influenced by the robot design, OLIVIA 3 is being designed in a completely different style from the version demonstrated at RoboCup 2010. The design idea is based on market research garnering public opinion about how people wanted a robotic receptionist to look. As opinions varied with age, ethics and social status, OLIVIA 3’s final appearance is still under discussion. “We also have to consider cultural contexts when we build robots—our aim is to develop social robots that people can interact with comfortably,” says Li.